AI Judges: The Future of Fairness?

As legal systems confront growing case backlogs and procedural delays, artificial intelligence is increasingly proposed as a solution to improve efficiency and consistency in sentencing. Automated systems are often framed as objective alternatives to human discretion, positioned as capable of reducing bias rather than reproducing it.

AI Judges: The Future of Fairness responds to this narrative of technological solutionism. It explores what it might mean to introduce AI judges into courtrooms not as distant speculation, but as a tangible and embodied experience.

Central Question

If artificial intelligence were to assist in sentencing decisions, what would engaging with such a system actually feel like?

The Work

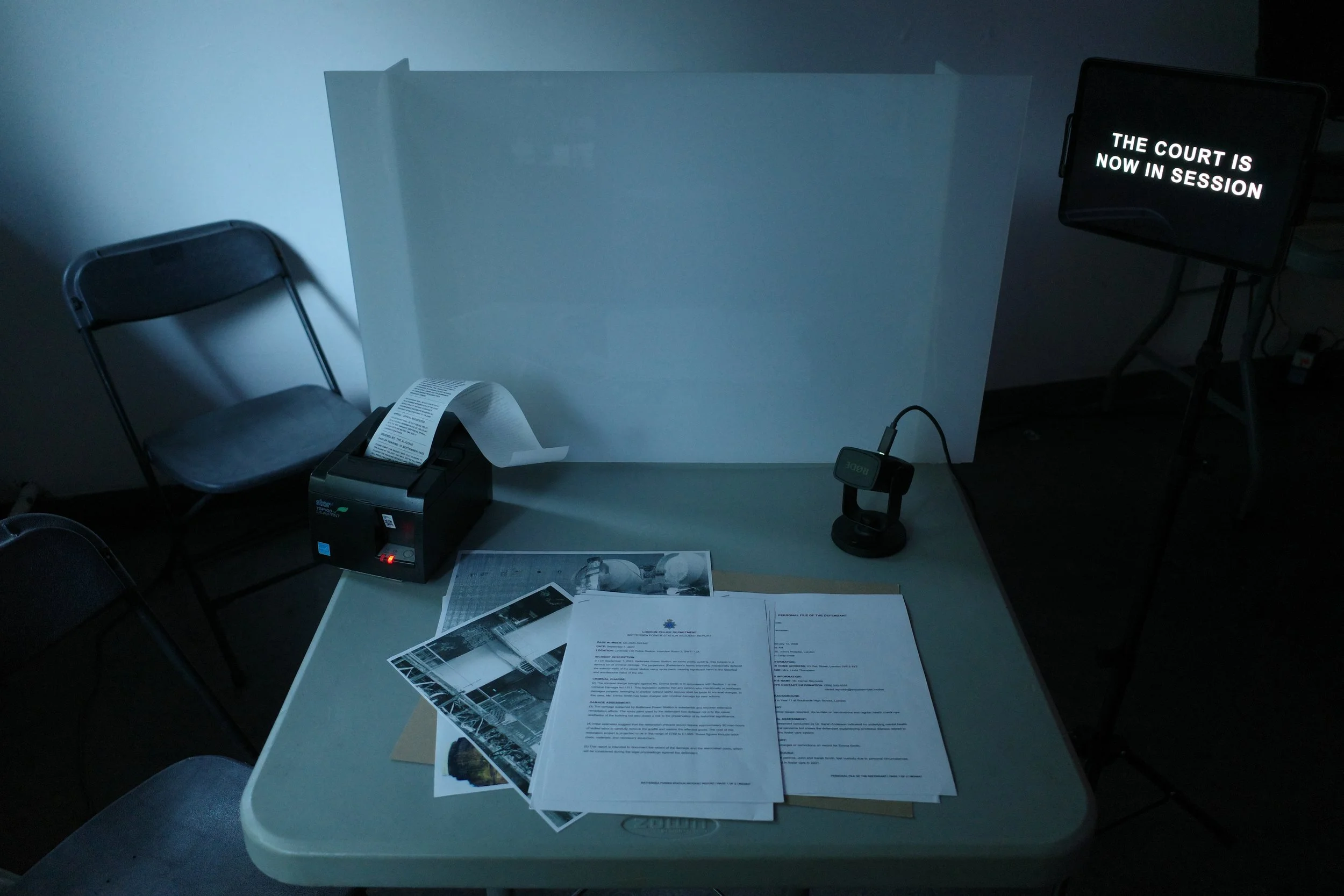

The project takes the form of an interactive courtroom performance in which audience members assume the role of defendants. Within this speculative setting, an AI judge presides over proceedings and delivers sentencing outcomes derived from structured inputs and procedural logic.

The system operates through a Wizard of Oz setup. While participants engage with what appears to be an AI judge, the decisions are enacted by a human operator positioned visibly behind a barrier. Judgment is therefore both performed and witnessed. The voice of neutrality has an author.

This visibility is intentional. It exposes how claims of algorithmic objectivity are shaped by human design, training data, and institutional values. In the performance, my own bias is made present, underscoring that AI systems are extensions of their creators rather than autonomous arbiters of fairness.

The courtroom setting reinforces the aesthetics of neutrality. Formal language, structured procedure, and spatial hierarchy contribute to the sense of legitimacy. Participants encounter the tension between computational reasoning and lived complexity, between efficiency and discretion.

Why This Form

The courtroom was chosen deliberately as a physical site of authority. Legal systems operate not only through rules, but through space, ritual, and hierarchy. The architecture of the courtroom, from elevated benches to controlled speech, reinforces legitimacy through embodied experience. Staging the project in a physical courtroom rather than a digital interface foregrounds how authority is constructed spatially. A website would frame the AI judge as a tool. The courtroom situates it within an existing structure of power.

The interactive performance format creates a bridge between abstract policy debate and lived encounter. Rather than asking audiences to imagine the implications of AI-assisted justice, the work places them inside the system. Participants do not observe a future scenario; they inhabit it.

The Wizard of Oz structure further destabilises the illusion of autonomy. By making the human operator visible behind the barrier, the work highlights how algorithmic neutrality is always mediated by human authorship. The AI judge becomes less a machine and more a reflection of its creator.

The presence of visible human oversight complicates binary narratives of technological optimism or dystopia. Instead of asking whether AI judges should or should not exist, the work examines how authority is constructed, transferred, and obscured within hybrid systems.

What the Project Reveals

AI Judges does not argue that algorithmic systems are inherently more or less biased than human judges. Instead, it examines how neutrality is constructed through interface, procedure, and institutional framing. Consistency, data-driven reasoning, and structured outputs can produce the appearance of fairness without addressing the assumptions embedded within design and training.

The project also interrogates the widespread assumption that efficiency signals improvement. In discussions around legal backlogs, speed is often framed as progress. Automated systems promise faster processing and streamlined decision-making. Yet speed does not necessarily deepen understanding or expand fairness. It can compress nuance, standardise complexity, and reinforce patterns already present in the data. When efficiency becomes the primary measure of success, justice risks being redefined as throughput rather than care.

The Wizard of Oz structure makes visible what is often obscured in real-world systems. Even when framed as artificial intelligence, judgment remains authored. In practice, responsibility is distributed across designers, institutions, and training data. What changes is not the presence of bias, but how and where it is embedded.

Rather than resolving whether AI judges are desirable or dangerous, the work shifts attention to the conditions under which such systems are accepted. When neutrality is convincingly staged and efficiency is presented as progress, institutional trust can form quickly. The performance of objectivity may be enough to stabilise belief, even when its foundations remain contested.

By making the operator visible, the project asks a broader question: when bias is embedded within code, who is recognised as its author? And when neutrality is performed convincingly enough, does it matter whether the system is human, machine, or something in between?